Once upon a time in a Mendocino Redwood Forest a slice of humanity decided to camp together and find ways to make technology work better for people. I had the good fortune to thrive and soak in this environment.

I sponsored this conference (and past ones) because I believe that a better future will emerge from global visionaries teamed up with builders. These are the seeds that will yield results months and years from now.

To my surprise, some seeds have sprouted! It was a few years ago that I dragged Nathan Schneider to the Internet Archive. I saw a direct connection between cooperatives and decentralization. Today the concept of "exit to community" is real, and Nathan is talking policy with luminaries like Lawrence Lessig.

Another seedling is Resonate Cooperative. This plucky music streaming service has evolved since my first involvement in 2016 and now has some serious support from Cooperative Jackson alums. Rich and Brandon are great stewards, and they made connections that will prove valuable in the future!

The People

Many past connections were renewed and strengthened over a meal or s'mores. Wendy, Brewster, Brian, Nick, Christina, Mai, Joahchim, Primavera, Liz, Danny, Jay, Emily, Tracey, Ross -- you are all incredible people. And here's to the many new connections, too many to mention. I connected with my Weaver group, the Mesh team, Amber, Jack, Christine, Lia, Koh, Jessy, and a bunch more. So many fantastic connections, in many beautiful liminal spaces including the 10ft fire pit, 24/7 Coffee, Hackers Movies, Dancing to DJ sets, button making, hikes among the trees and stargazing.

Sessions and More

When we weren't congregating, there were all sorts of great ways to converge on the important topics of decentralization. I have most of what I attended on my schedule, but here are some highlights to give you a taste.

Hack. the. Planet

My portable projector, glo-totems and movie screen allowed me to introduce the classic movie Hackers to many.

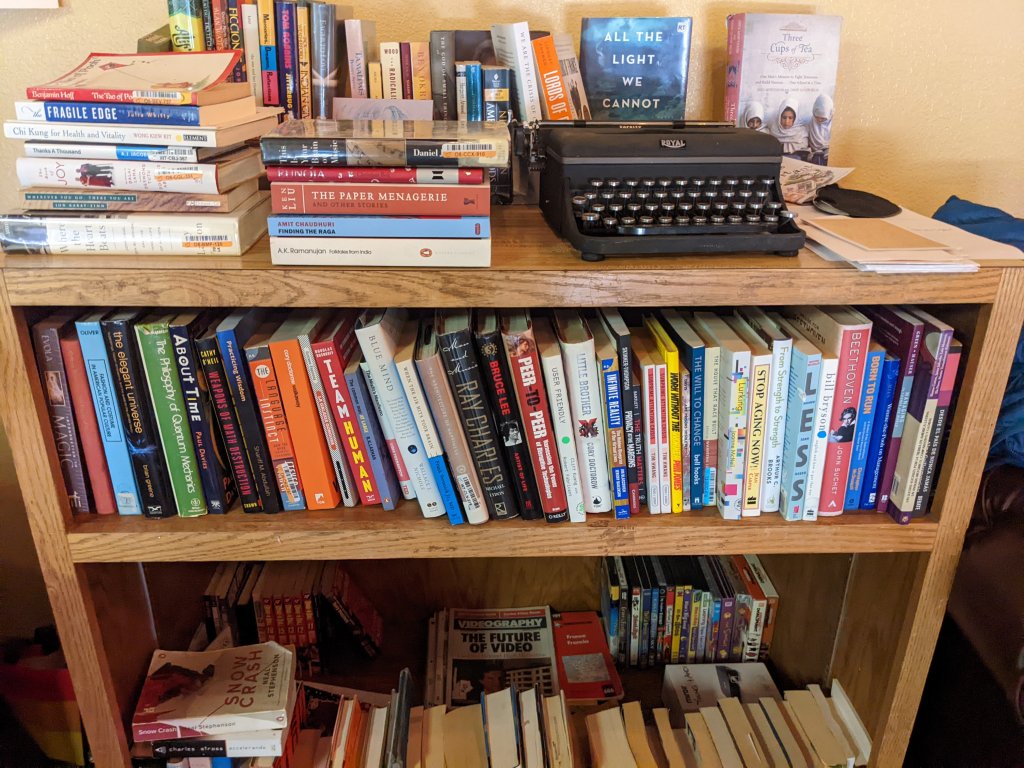

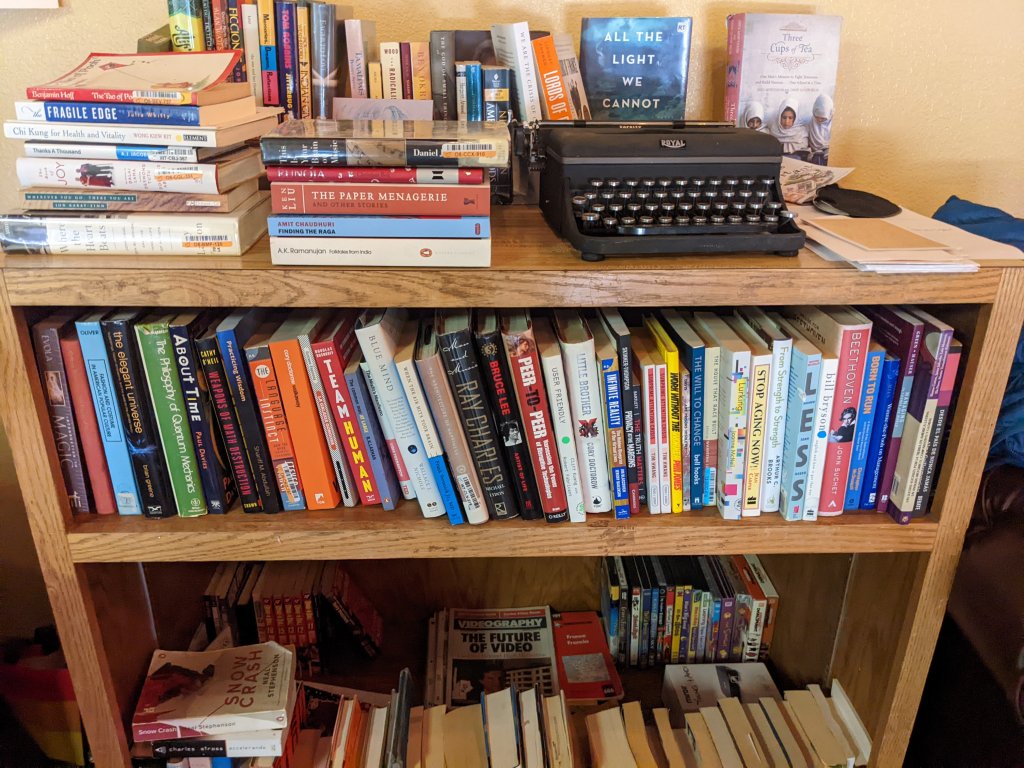

Library

Each attendee was asked to give two books and take two books from the library. This was brilliant. I took a photo of all the books and have an instant reading list for the next year and beyond.

Interplanetary Timekeeping

A fascinating session about governing a timekeeping system on the moon and beyond presented by Jessy Kate Schingler.

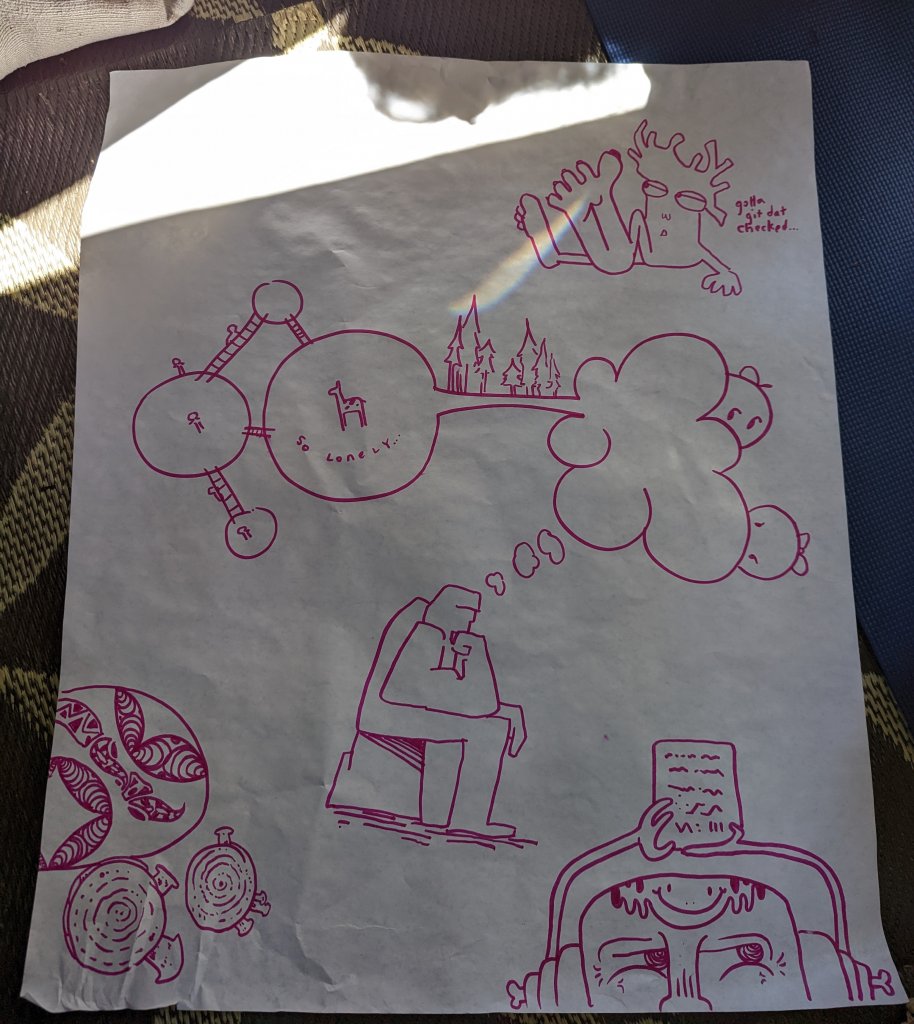

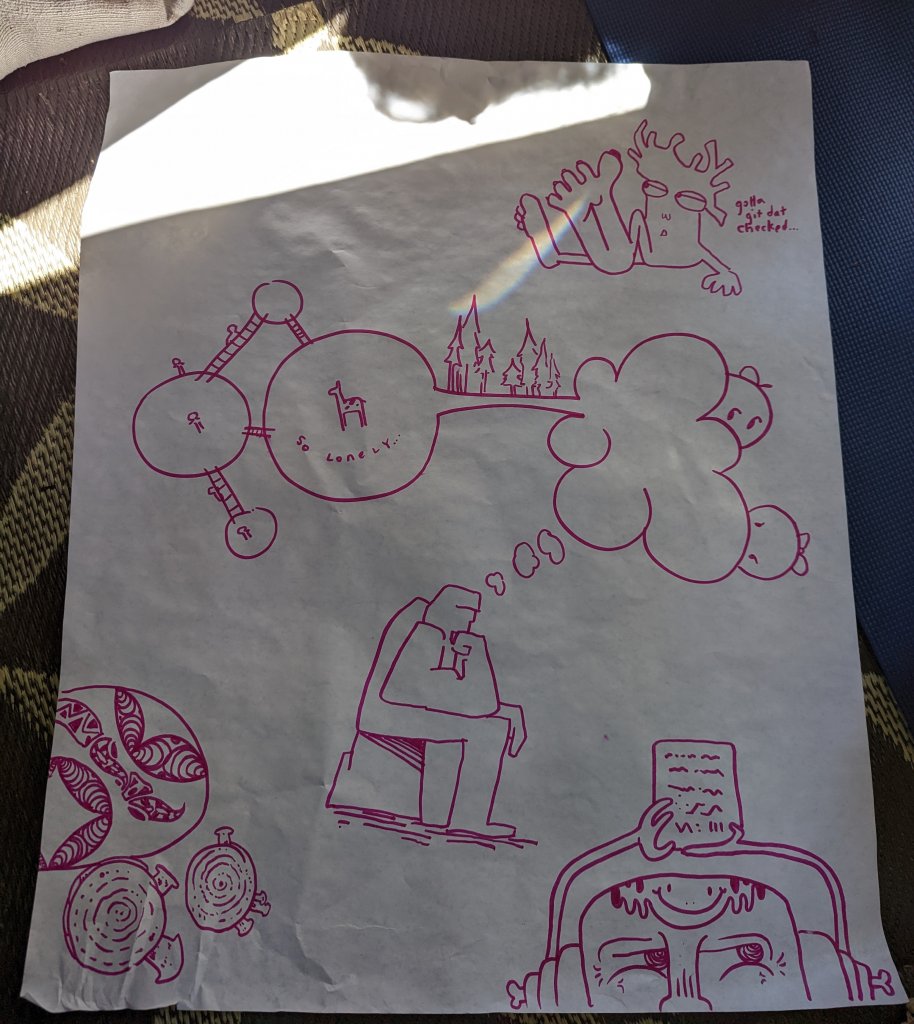

Systems Mapping Governance

Christina Bowen, a master of visualizing complex systems with stock-and-flow diagrams, turned these sessions, held over multiple days, into a rich dive into how systems interact. I spent a lot of time in these sessions and am so glad I did!

A Governance Layer for the Internet / the Four Forces that Regulate the Internet

The first of this three-day set of sessions went deep with some great thinkers and participation from the entire group. It's hard to summarize because it went to many places, but I believe the focus on turning the abstract into reality was there.

Lawrence Lessig also presented the Four Forces, which will be familiar to anyone who has read his books. It was a good way to see things directly from his perspective.

Solidarity in practice: The story of Digital Democracy and Mapeo

It was refreshing to see a fully realized, decentralized, offline-first application used to help the people of the Amazon realize their own rights and use technology for their local needs. We need more Mapeos!

Peer Based Social Science in the Wild

Zarinah Agnew and Jessy Kate Schingler had us all survey ourselves about self-governance and allowed us to experience ethnographic research directly. I have my 'token' of completion allowing me to interact with the DAO. Understanding what people need and how they interact is key to finding systems that work for the most people.

Bad Apple

Lisa Rein from the Aaron Swartz project showed how you can build a system to process internal police public records to keep communities safer.

Proof Mode

Jack Fox was on hand to present Proof Mode and described how this mechanism for turning photos into signed evidence was used by activists in the rainforest to provide irrefutable proof that they live in the areas slated for oil exploration. It was inspiring to see math and technology aimed at a specific, on-the-ground problem.

Policy, Governments and Tornado Cash

Koh, Danny O'Brien and some others took advantage of the free time to set up a super engaging conversation about how decentralization intersects with government policy. Many of us (myself included) had discussed much of the same at DEFCON 30 a few weeks prior. I was able to contribute a little bit to the discussion. The conversation flowed quickly, and I think that some of the ideas about using norms and industry coordination to address these issues may prove fruitful. I'm excited to see the follow-up from the connections made at this event that emerged from the soup!

Connecting with the Earth and Indigenous Practices

Connecting the decentralization movement with indigenous practices and rights was a joy to behold. I appreciated the speakers on the topics and the conversations with many of the Dweb fellows from around the world. Remembering that technology connects with the earth was a good reminder that we are stewards of the land and the technology ecosystems. For the water ceremony I brought Oakland condensed fog.

Art Art and more Art!

Typewriter Tarts had an installation in the library that was amazing! I hope I can help them get some of their work published. The name-tag/button making station was amazing too: magazines were provided to cut and paste into your own individual creation. Sessions on how art can intersect with decentralized services were plentiful. I attended a good breakout with Barry Threw from Gray Area and Victoria Ivanova, who guided us through thinking about how art and technology might evolve in the current environment and what needs to change. As usual, the participants came up with a plethora of ideas. I hope to see some of this published soon!

Farewells

And just like that it was over. Due to a conflicting schedule I had to skip the last day. I had breakfast, packed up my projector and said my goodbyes. I'm already planning for a Brazil version and for 2023, and hope to see the garden grow from the seeds planted here. It was a magical time and brought back memories of early Gopher conferences and other early formative Internet events. May the ripples spread out and become waves!

![[image of a van mirror reflecting a vivid sunset with a palm tree lined highway]](https://1500wordmtu.com/file/0100c1b25970516a62816c49c141b46c/PXL_20220312_020631253.jpg)